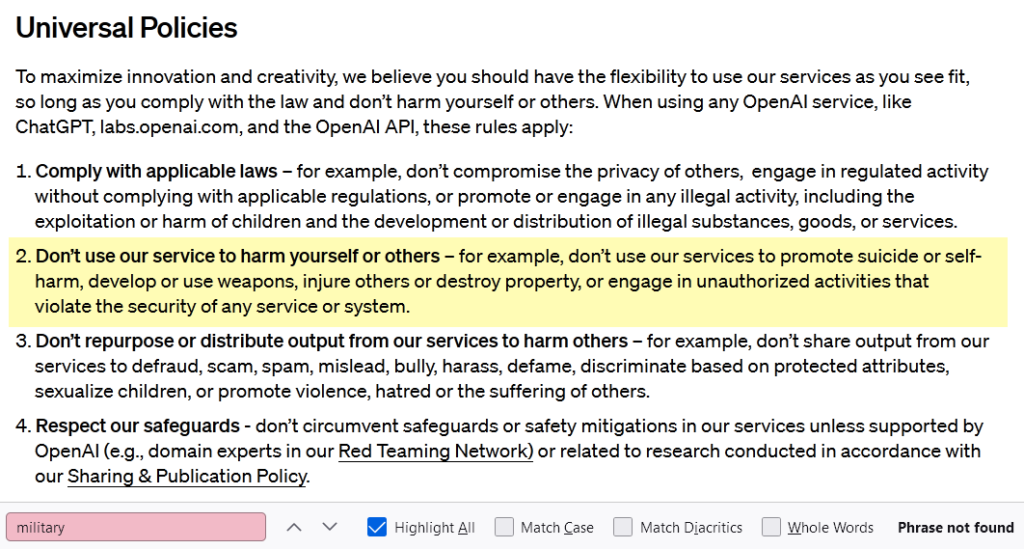

OpenAI has rewritten its rules to allow the use of its AI in military and warfare applications. The change, which came to light recently, signals a strategic shift in the company’s approach. “We aimed to create a set of universal principles that are both easy to remember and apply,” an OpenAI spokesperson said, emphasizing how broadly the principle ‘Don’t harm others’ applies across contexts. While the decision expands the range of uses for OpenAI’s technology, it also raises questions about how AI will shape military technology in the future.

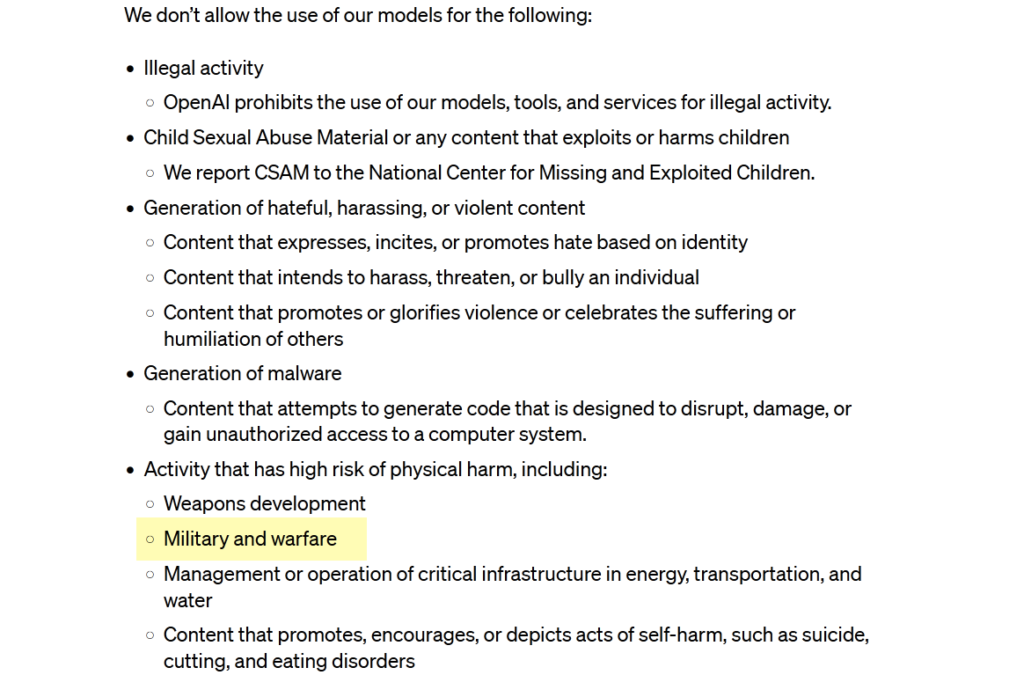

Until a few days ago, OpenAI’s rules explicitly prohibited using its technology for anything related to “military and warfare.” That is no longer the case. The latest update to the rules, as reported by The Intercept, removed those words. The new rules still bar using OpenAI’s services to develop weapons, but the absence of the words “military and warfare” suggests that OpenAI is now more open to working with military organizations.

What does this mean in practice? That remains to be seen. The timing is notable, however, given the growing use of AI in military operations worldwide. Sarah Myers West of the AI Now Institute highlighted this, saying, “With AI systems being used to target civilians in places like Gaza, it’s a significant moment to decide to remove ‘military and warfare’ from OpenAI’s allowed use policy.”

The change also implicitly acknowledges that OpenAI’s technology can serve military purposes beyond traditional warfare. While OpenAI does not currently offer a product that can directly harm people, The Intercept suggests its technology could be used for tasks indirectly linked to harm.

In a significant policy change, OpenAI, led by Sam Altman, appears not only to permit military use of its AI technologies but to welcome it. TechCrunch points out that while OpenAI now allows military-related tasks, it still bans using its AI to develop weapons. This reflects a careful balance between supporting military-related work and preventing the creation of weapons.

In an unexpected update to its usage policy, OpenAI has made clear that it is open to military applications of its technologies. The company framed the change around universal principles that are easy to apply, with ‘Don’t harm others’ as a broad, easily understood rule. But by removing the language that barred the use of its products for “military and warfare,” as The Intercept observed, OpenAI is signaling a willingness to work with military customers.

OpenAI’s platforms, such as ChatGPT, could now find military uses beyond traditional warfare. For example, they could help army engineers summarize large volumes of documentation about a region’s infrastructure. But OpenAI also faces the challenge of deciding how to engage with government and military funding, given the many roles the military plays in research, investment, and supporting infrastructure.

This change opens up new business opportunities for OpenAI, allowing the company to explore collaborations with government entities on work that goes beyond traditional warfare. However, it also raises important ethical questions about the use of AI in the military and feeds a broader conversation about how to use advanced technologies responsibly.

OpenAI’s decision to rewrite its rules and allow the use of its AI for military and warfare purposes reflects how the relationship between AI and global security is changing. While the move opens up new possibilities, it also raises questions about the ethical limits of using AI in military settings. As the technology continues to advance, OpenAI’s decision sets the stage for ongoing discussions about how to use AI responsibly and what that means for society as a whole.