OpenAI will digitally tag images generated by DALL-E 3, including those created through ChatGPT, to combat misinformation. By embedding provenance metadata, the company gives users a way to check whether an image was produced by AI and where it came from. The move is a notable step in the fight against fake content, though it depends on the metadata staying attached to the image.

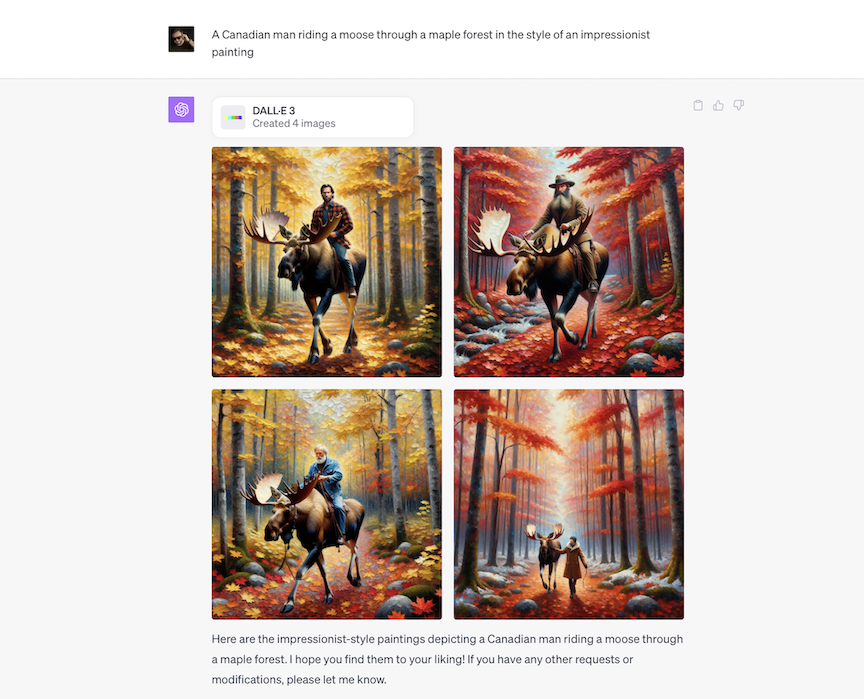

In today’s world, where fake news and misinformation run rampant online, tech companies are stepping up to combat the spread of false information. One such company, OpenAI, has announced a new initiative to tackle this problem head-on. It will digitally tag images produced by its DALL-E 3 image generator, aiming to help users verify whether what they’re seeing was created by AI.

Tagging DALL-E 3 images, including those generated inside ChatGPT, is a big deal. It means that when you come across one of these images online, you can check its origin, as long as the tag is still attached. The idea is simple but powerful: by adding special tags to these images, users can trace where they came from and whether they’ve been tampered with.

The reason behind this move is clear. With the rise of AI technology, it’s become easier than ever to create convincing fake images. These images can be used to spread false information, manipulate opinions, and deceive people. By providing a way to verify the origin of these images, OpenAI is giving users the tools they need to navigate the digital world more safely.

How does it work? It’s all about something called provenance metadata. This term simply means information about where an image came from and how it was created. By adding this metadata to AI-generated images, OpenAI is essentially leaving a digital trail that users can follow. This trail allows them to see which tool produced the image and whether it has been altered since.
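To make the idea of a digital trail concrete, here is a minimal Python sketch of how a provenance record could be bound to an image and checked later. It is a toy illustration only, not the format OpenAI actually uses: the function names, the record layout, and the shared `SIGNING_KEY` are all assumptions made for this example, and real provenance systems rely on certificate-based signatures rather than a shared secret.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical demo key (an assumption for this sketch); real systems
# sign with certificates instead of a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def attach_provenance(image_bytes: bytes, generator: str) -> dict:
    """Build a toy provenance record tied to the image's content hash."""
    claim = {
        "generator": generator,
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_provenance(image_bytes: bytes, record: Optional[dict]) -> str:
    """Return 'verified', 'altered', 'invalid', or 'unknown'."""
    if record is None:
        # Metadata was stripped: nothing can be said about the image.
        return "unknown"
    payload = json.dumps(record["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record["signature"]):
        # The record itself was edited after signing.
        return "invalid"
    if hashlib.sha256(image_bytes).hexdigest() != record["claim"]["sha256"]:
        # The pixels changed after the record was created.
        return "altered"
    return "verified"


image = b"fake image bytes for the demo"
record = attach_provenance(image, "DALL-E 3")
status_original = verify_provenance(image, record)
status_edited = verify_provenance(image + b"!", record)
status_stripped = verify_provenance(image, None)
```

In this sketch the untouched image checks out, an edited copy no longer matches the recorded hash, and an image with no record at all can only be reported as unknown. A missing record says nothing either way about whether the image is AI-generated.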


As with any new technology, there are some limitations to consider. For one, this verification method only works if the metadata is intact. If someone removes or alters the metadata, the image can no longer be verified. Additionally, this strategy only applies to still images for now. Videos and other types of content are not yet covered by this initiative.

Despite these limitations, the potential benefits are significant. By giving users the ability to check the origin of AI-generated images, OpenAI is empowering them to make more informed decisions online. Instead of blindly trusting what they see, users can take a closer look and judge for themselves how much weight an image deserves.

OpenAI isn’t the only one working on this problem. Other tech giants like Google and Meta are also exploring ways to combat misinformation. Google’s DeepMind, for example, has developed a system called SynthID, which digitally watermarks images and audio created by AI. Meanwhile, Meta is testing invisible watermarking technology to protect against image manipulation.

OpenAI’s decision to digitally tag DALL-E 3 images is a significant step forward in the fight against misinformation. By providing users with the tools they need to verify the origin of AI-generated content, this initiative has the potential to make the internet a safer and more trustworthy place. As technology continues to evolve, it’s essential that we remain vigilant and proactive in addressing the challenges posed by misinformation.
